You Can't Optimize What You Can't See: Why AI Cost Observability Is the Missing Layer

AI costs are rising. We covered why in The AI Economic Paradox: cheaper tokens fuel elastic demand, agentic workflows multiply calls, and end-of-month invoices arrive with no explanation attached. The paradox is real. But the root cause isn't economic. It's operational. Organizations don't overspend on AI because inference is expensive. They overspend because they have no idea where the money goes.

Cost observability, meaning real, request-level, attributable cost tracking, is the missing layer. And without it, every other optimization strategy is flying blind.

The Invisible Bleed: Where AI Budgets Die

Cloud computing taught us this lesson once already. Gartner estimates that organizations waste 25-35% of their cloud spend due to lack of visibility. The FinOps Foundation didn't emerge because the cloud was expensive. It emerged because cloud spend was invisible, distributed across accounts, teams, and services with no single pane of glass.

AI has the same problem, but the cost dynamics are far more volatile. In the cloud, a VM running 24/7 is at least consistently expensive. In AI, a single workflow can cost $0.02 on Monday and $2.00 on Tuesday because the prompt was longer, the retrieval pulled more context, or someone swapped models in a config file nobody reviewed.

In a recent blog post, Tomasz Tunguz raised a related issue: "Technology companies are adding a fourth component to engineering compensation: salary, bonus, options, & inference costs. Levels.fyi pegs the 75th percentile software engineer salary at $375k. Add $100k in inference & the fully loaded cost is $475k. That's 21% in tokens."

The result is what we called "the end-of-month AI bill shock" in our previous post. Finance flags an overage. Engineering kills the feature. The organization learns the wrong lesson, that "AI is too expensive", when the real lesson is: we didn't track AI costs the way we track everything else.

Consider the math. A support team ships an AI agent. In staging, each ticket triggers 3-5 model calls at roughly $0.01 each. Cost per ticket: at most $0.05. In production, longer threads, tool failures, and ambiguous queries push the agent to 15-30 calls per ticket, each carrying more context than in staging. Some tickets hit 100+. Cost per ticket jumps to $0.50, occasionally $5.00. Multiply by 10,000 tickets per month. At $0.50 per ticket, the projected $500/month line item becomes $5,000; if even one ticket in five lands in the $5.00 tail, it approaches $15,000. Nobody knows until the invoice arrives.
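The arithmetic is easy to check with a short sketch. The ticket volume and per-ticket costs come from the example above; the `tail_share` parameter and its 20% value are assumptions added here, only to show how much a heavy tail moves the monthly total.

```python
def monthly_cost(tickets: int, typical_cost: float,
                 tail_cost: float = 0.0, tail_share: float = 0.0) -> float:
    """Monthly spend when a fraction `tail_share` of tickets costs `tail_cost`."""
    typical = tickets * (1 - tail_share) * typical_cost
    tail = tickets * tail_share * tail_cost
    return typical + tail


TICKETS = 10_000  # tickets per month, from the example above

projected = monthly_cost(TICKETS, typical_cost=0.05)    # staging estimate
production = monthly_cost(TICKETS, typical_cost=0.50)   # production, no tail
# Hypothetical: 20% of tickets land in the $5.00 tail.
with_tail = monthly_cost(TICKETS, 0.50, tail_cost=5.00, tail_share=0.20)

print(f"projected:  ${projected:,.0f}")
print(f"production: ${production:,.0f}")
print(f"with tail:  ${with_tail:,.0f}")
```

Under these assumptions the tail, not the typical ticket, drives most of the bill: one ticket in five accounts for $10,000 of the $14,000 total.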

Why Traditional Monitoring Fails AI Cost Management

Traditional APM tools like Datadog, New Relic, and Grafana excel at tracking requests and latency for deterministic systems. But AI cost dynamics break these models in specific ways.

Cost is per-token, not per-request. An LLM call costs radically different amounts depending on context length, model selection, and output verbosity. Two requests to the same endpoint, from the same user, in the same second, can differ by 100× in cost.
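A minimal sketch of per-token pricing shows how two calls to the same endpoint can end up 100× apart. The `Price` type and the rates ($3 per million input tokens, $15 per million output tokens) are illustrative assumptions, not any provider's actual price list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """Hypothetical price card, in dollars per million tokens."""
    input_per_million: float
    output_per_million: float


def request_cost(price: Price, input_tokens: int, output_tokens: int) -> float:
    """Cost of one call: each token is billed at its direction's rate."""
    billed = (input_tokens * price.input_per_million
              + output_tokens * price.output_per_million)
    return billed / 1_000_000


price = Price(input_per_million=3.0, output_per_million=15.0)  # assumed rates

short_call = request_cost(price, input_tokens=200, output_tokens=50)
long_call = request_cost(price, input_tokens=40_000, output_tokens=1_000)

print(f"short: ${short_call:.5f}")
print(f"long:  ${long_call:.5f}")
print(f"ratio: {long_call / short_call:.0f}x")
```

Both calls count as one request in a traditional APM view; only the token counts explain the gap.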

The most expensive tokens are invisible. The user's prompt is often the smallest part of the payload. System instructions, tool schemas, retrieval context, and agent scaffolding can represent 80-90% of input tokens. They're the dark matter of AI spend.
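To make the proportion concrete, the sketch below breaks one input payload into its components and computes the share the user never typed. The component names and token counts are made-up illustrative values, chosen to land inside the 80-90% range mentioned above.

```python
def hidden_share(payload: dict[str, int], visible_key: str = "user_prompt") -> float:
    """Fraction of input tokens that did not come from the user's prompt."""
    total = sum(payload.values())
    if total == 0:
        return 0.0
    hidden = total - payload.get(visible_key, 0)
    return hidden / total


# Hypothetical token counts for a single agent call.
payload = {
    "system_instructions": 1_200,
    "tool_schemas": 2_500,
    "retrieval_context": 5_000,
    "agent_scaffolding": 300,
    "user_prompt": 1_000,
}

share = hidden_share(payload)
print(f"hidden share of input tokens: {share:.0%}")
```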

Model diversity makes aggregation meaningless. Organizations now use multiple models across multiple providers, each with different pricing and token counting methods. A single "total AI spend" number is as useful as a single "total cloud spend" number was in 2015: technically accurate, operationally useless.

Agentic workflows make costs non-linear. One user action can trigger planning, retrieval, tool calls, retries, and fallbacks. You can't forecast by multiplying "requests × price per request" when model calls per request vary by 10× or more.

AI needs its own cost observability layer, purpose-built for tokens, models, providers, and the specific economics of inference workloads.

The Four Levers of AI Cost Control

Before diving into tooling, it's worth naming the four levers that actually reduce AI costs at scale. Observability doesn't replace them. It makes them possible.

- Token compression. Reducing billed tokens per request by pruning irrelevant context and rewriting verbose prompts into compact state. Fewer tokens in, lower bill. But compression only works when you can measure it: per request, per workflow, over time.

- Model routing. Sending commodity tasks to cheaper models and reserving frontier models for tasks that need them. Per-request cost can drop by 10-50×, but routing decisions require cost data per workflow.

- Execution boundaries. Hard caps on call count, retry depth, and token budget per workflow. These prevent tail cases, the 1% of requests that consume 30% of spend, from dominating the month.

- Cost attribution. Assigning every dollar of AI spend to a team, feature, environment, and customer. Attribution turns cost from a shared overhead into an engineering metric.
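Of the four levers, execution boundaries are the easiest to sketch in a few lines. The example below is one possible shape for a per-workflow guard, not Edgee's implementation: `WorkflowBudget` and `BudgetExceeded` are hypothetical names, and a retry-depth cap is left out for brevity.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when a workflow hits one of its hard caps."""


@dataclass
class WorkflowBudget:
    max_calls: int
    max_tokens: int
    calls: int = 0
    tokens: int = 0

    def charge(self, tokens: int) -> None:
        """Record one model call, or raise before a cap would be exceeded."""
        if self.calls + 1 > self.max_calls:
            raise BudgetExceeded(f"call cap of {self.max_calls} reached")
        if self.tokens + tokens > self.max_tokens:
            raise BudgetExceeded(f"token cap of {self.max_tokens} reached")
        self.calls += 1
        self.tokens += tokens


budget = WorkflowBudget(max_calls=3, max_tokens=10_000)
stopped = False
for _ in range(5):                      # the agent "wants" five calls
    try:
        budget.charge(tokens=2_000)     # check the caps before calling the model
    except BudgetExceeded:
        stopped = True                  # fall back or escalate instead of looping
        break

print(budget.calls, budget.tokens, stopped)
```

Checking the cap before the call, rather than after, is the design choice that matters here: the fourth call is never sent, so the tail case is bounded instead of merely reported.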

Every lever depends on the same foundation: granular, real-time cost observability. Which is exactly what we built.

Inside the Edgee Observability Dashboard

The Edgee dashboard is designed around one principle: every dollar of AI spend should be explainable, attributable, and actionable. In real time, not at the end of the month.

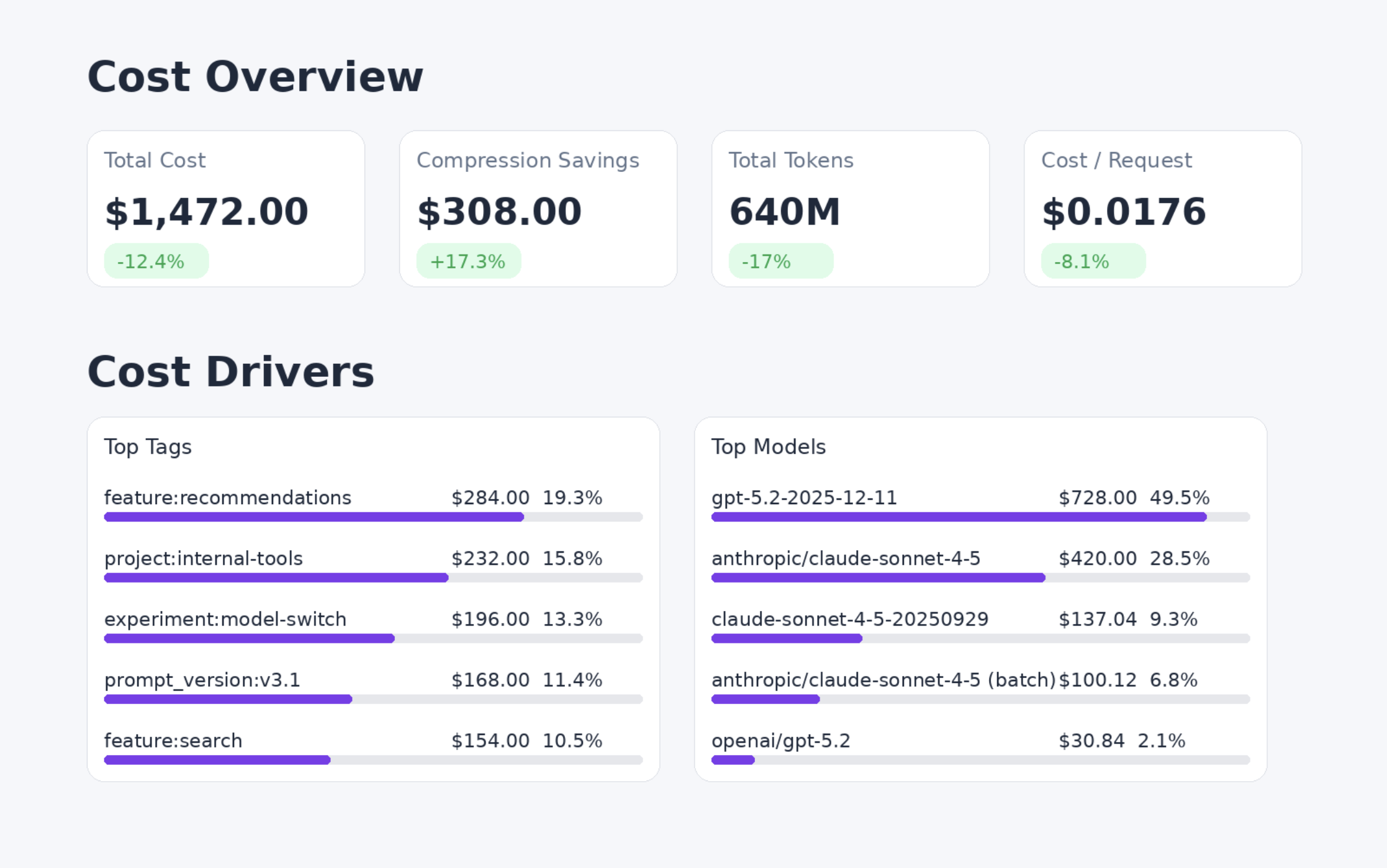

Six core metrics, one unified view

The dashboard surfaces six metrics that together give a complete picture of AI economics: Compression Savings ($), Cost ($), Input Tokens, Output Tokens, Cost per Request ($), and Request Count. Each metric can be viewed over time (daily, weekly, monthly) or filtered by API key and date range. This isn't a reporting tool you check quarterly. It's an operational view you use daily to catch cost drift before it compounds.

Multi-dimensional breakdowns

Every metric can be viewed by Time and split across three dimensions: Tag, Model, and Provider. Split by Model to see which models are driving cost. Is GPT-4o consuming 80% of budget while handling only 30% of requests? That's a routing opportunity. Split by Provider to compare cost efficiency across OpenAI, Anthropic, and Google for the same workload types. Split by Tag to attribute cost to the teams, features, and environments that generate it.
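The routing signal described above is a comparison of two shares: a model's share of cost against its share of requests. The sketch below computes both from local request records. It is not the dashboard's query API; the record shape, the `small-model` name, and the costs are assumptions for illustration.

```python
from collections import defaultdict


def shares_by(records: list[dict], key: str) -> dict[str, tuple[float, float]]:
    """Return {value: (share of cost, share of requests)} for one dimension."""
    cost: dict[str, float] = defaultdict(float)
    count: dict[str, int] = defaultdict(int)
    for record in records:
        cost[record[key]] += record["cost"]
        count[record[key]] += 1
    total_cost = sum(cost.values())
    total_count = sum(count.values())
    if total_cost == 0:
        return {}  # nothing was spent, so there are no cost shares to report
    return {k: (cost[k] / total_cost, count[k] / total_count) for k in cost}


# Hypothetical records: 3 expensive calls and 7 cheap ones.
records = (
    [{"model": "gpt-4o", "cost": 0.56}] * 3
    + [{"model": "small-model", "cost": 0.06}] * 7
)

shares = shares_by(records, "model")
for model, (cost_share, request_share) in shares.items():
    print(f"{model}: {cost_share:.0%} of cost, {request_share:.0%} of requests")
```

The same function works for any dimension present on the records, so passing `"provider"` instead of `"model"` gives the provider split.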

Request-level tagging for cost attribution

Tags are the backbone of cost governance. Every request sent through Edgee can carry multiple tags: environment (production, staging), feature (chat, summarization, rag-qa), team (team-backend, team-data), or customer (tenant-acme, user-123). Tags work natively across Edgee SDKs in TypeScript, Python, Go, and Rust, and via the x-edgee-tags header for teams using OpenAI or Anthropic SDKs directly. In the dashboard, tags become filters: drill from "total spend this month" down to "spend on the support-agent feature, in production, by team-support, on Claude Sonnet" in seconds. This is how you turn a $40,000 invoice into an actionable engineering conversation.
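The drill-down is a filter on tag intersection: keep only the requests that carry every selected tag, then sum their cost. The sketch below mimics that on local records. It does not call Edgee's SDK or set the x-edgee-tags header (whose exact format is not covered here); the record shape and dollar amounts are assumptions.

```python
def spend_for(records: list[dict], *required_tags: str) -> float:
    """Sum cost over records that carry every one of the required tags."""
    wanted = set(required_tags)
    return sum((r["cost"] for r in records if wanted <= set(r["tags"])), 0.0)


# Hypothetical request records, each with a cost and its tags.
records = [
    {"cost": 12.0, "tags": ["production", "support-agent", "team-support"]},
    {"cost": 3.0, "tags": ["staging", "support-agent", "team-support"]},
    {"cost": 7.5, "tags": ["production", "chat", "team-backend"]},
]

total = spend_for(records)
production = spend_for(records, "production")
drill = spend_for(records, "production", "support-agent", "team-support")

print(f"total: ${total:.2f}")
print(f"production: ${production:.2f}")
print(f"production + support-agent + team-support: ${drill:.2f}")
```

Each added tag narrows the set, which is why consistent tagging at request time matters more than any after-the-fact analysis.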

Compression metrics that close the optimization loop

Token compression is Edgee's most direct cost-saving feature, but savings mean nothing if you can't measure them. The dashboard tracks compression savings in dollars alongside raw cost, so you can see exactly how much you're saving and where you could save more. Every response includes original token count, compressed token count, tokens saved, and compression rate. Analyze compression effectiveness by use case (RAG vs. agents vs. document analysis), by model (which models compress best for your workload), and over time (catch compression degradation before it inflates costs). When a new prompt version adds verbose instructions and compression rates drop from 45% to 28%, the dashboard shows it immediately.
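The per-response numbers relate to each other in a simple way, sketched below. This assumes "compression rate" means tokens saved divided by original tokens, which the text above does not define explicitly; the function names and the 10-point tolerance are likewise illustrative choices.

```python
def compression_rate(original_tokens: int, compressed_tokens: int) -> float:
    """Fraction of tokens removed (assumed definition: saved / original)."""
    if original_tokens <= 0:
        return 0.0
    return (original_tokens - compressed_tokens) / original_tokens


def degraded(baseline_rate: float, current_rate: float,
             tolerance: float = 0.10) -> bool:
    """True when the rate has dropped by more than `tolerance` (absolute)."""
    return baseline_rate - current_rate > tolerance


# Hypothetical token counts before and after a verbose prompt change.
before = compression_rate(original_tokens=2_000, compressed_tokens=1_100)
after = compression_rate(original_tokens=2_000, compressed_tokens=1_440)

print(f"before: {before:.0%}, after: {after:.0%}")
print("degraded:", degraded(before, after))
```

With these inputs the rate falls from 45% to 28%, matching the scenario above, and the 17-point drop exceeds the tolerance.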

Performance monitoring tied to cost

Latency and cost are deeply linked in AI systems. A slow model that requires retries costs more than a fast one that succeeds on the first try. The dashboard tracks total request time, time-to-first-token, tokens per second, and edge processing overhead, all broken down by model, provider, and region. Error tracking covers provider errors, failover events, retry attempts, and error codes. When retry storms silently double your spend, performance monitoring is what makes the cost spike explainable.

Alerts and budgets for proactive cost control

Observability without guardrails is a spectator sport. Edgee's alerting system closes the loop with budget alerts at configurable thresholds (80%, 90%, 100% of monthly spend), automatic rate limiting when budgets are hit, anomaly detection for request spikes and compression degradation, and notifications via email and webhook. The difference between a $50,000 overage discovered at invoice time and a $5,000 spike caught within the hour is entirely a function of whether guardrails exist.
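The threshold logic behind a budget alert can be sketched in a few lines. This is an illustration of the idea, not Edgee's alerting code: the function names are hypothetical, and only the 80%, 90%, and 100% thresholds come from the text above.

```python
THRESHOLDS = (0.8, 0.9, 1.0)  # 80%, 90%, 100% of the monthly budget


def crossed(spend: float, budget: float,
            thresholds: tuple[float, ...] = THRESHOLDS) -> list[float]:
    """All thresholds that current spend has reached."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    ratio = spend / budget
    return [t for t in thresholds if ratio >= t]


def newly_crossed(previous_spend: float, spend: float, budget: float,
                  thresholds: tuple[float, ...] = THRESHOLDS) -> list[float]:
    """Thresholds reached since the last check, so each alert fires once."""
    already = set(crossed(previous_spend, budget, thresholds))
    return [t for t in crossed(spend, budget, thresholds) if t not in already]


budget = 10_000.0
print(crossed(9_200.0, budget))                # 80% and 90% reached
print(newly_crossed(7_900.0, 8_100.0, budget)) # only 80% is new
print(newly_crossed(8_100.0, 8_500.0, budget)) # nothing new, no repeat alert
```

Comparing against the previous check is what keeps an alert from firing on every request once a threshold is passed.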

The Cost of Not Seeing

AI cost problems compound. A prompt that's 20% too verbose doesn't just cost 20% more. It fills the context window faster, which can reduce quality and increase retries, and the combined effect can approach double the cost. A model that's overqualified for a task sets a precedent that propagates across teams for months.

Observability breaks these compounding cycles by making them visible before they cascade. The team that sees cost-per-request climbing for a specific tag can fix it before it becomes the new normal. The team that sees compression rates dropping can address the root cause before it propagates.

Start early. AI usage patterns calcify quickly. The workflows you build today become the architecture you're stuck with tomorrow. Instrumenting cost observability now is dramatically cheaper than retrofitting it after the invoices are already painful.

The paradox we described in our previous post resolves not through cheaper tokens but through better visibility. You can't compress what you can't measure. You can't attribute what you can't tag. You can't budget what you can't see.

And you can't afford not to see it.

Sources

- Edgee Documentation (Observability Features)

- Gartner (Cloud Waste Statistics)

- FinOps Foundation (State of FinOps)

- Edgee Blog: The AI Economic Paradox