Should Sam Altman Fear Token Compression Technology or Embrace It?

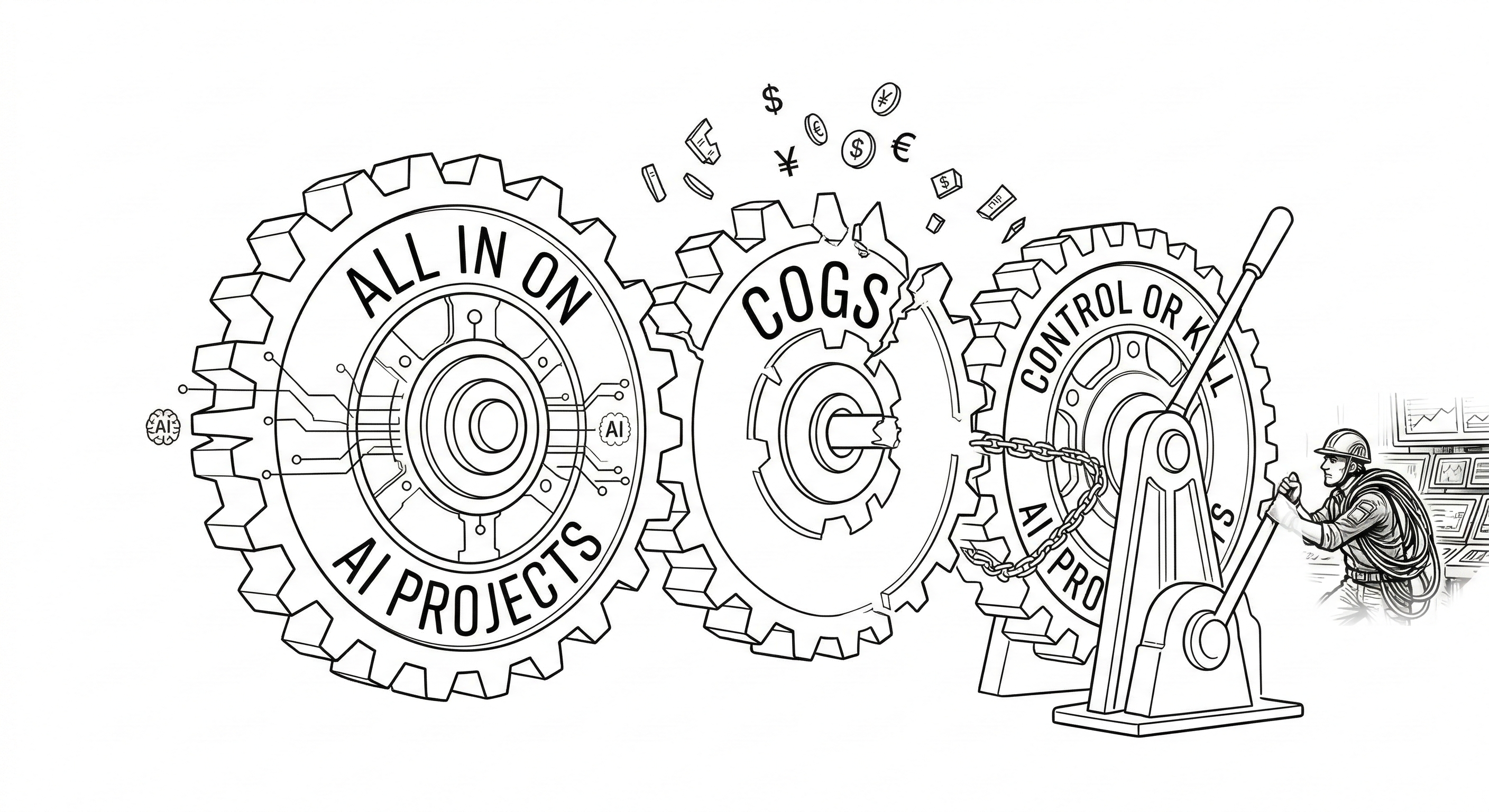

Right now, across thousands of companies, the same scene is playing out. An AI workflow that didn't exist 18 months ago is quietly consuming tokens by the millions. A product team has embedded a language model into a core process. An engineering team is running automated agents. A customer service platform has replaced human triage with AI. The technology is working. And the invoice is growing.

This is the reality of enterprise AI in 2025: a line item that didn't exist two years ago has become one of the fastest-growing costs on the P&L. EBITDA isn't improving yet. COGS are increasing significantly, driven directly by AI inference spend. Every new model deployment, every agentic workflow, every product feature powered by a language model adds to a cost base that compounds quietly until it lands on a quarterly review and suddenly becomes someone's problem.

The bill shock stories are already circulating in every engineering org. A CTO runs a one-day internal test on a new AI feature and wakes up to a $7,000 charge on the company card. A product team ships an AI-powered workflow without a token budget, and the OpenAI invoice at the end of the month comes in at $80,000, unforecasted, unexplained, and very much unbudgeted. These aren't edge cases. They are early warnings of what happens when AI consumption scales without a cost management layer underneath it. And most companies have barely started scaling.

The Great AI Pricing Illusion

Gartner puts enterprise generative AI spending at $37 billion in 2025, up from $11.5 billion in 2024. That's a 3.2× year-over-year jump: not gradual adoption, but near-vertical acceleration. Meanwhile, the raw cost of running an inference call on most frontier models has dropped significantly compared to just two years ago. On paper, you'd expect enterprise bills to shrink as efficiency improves.

They haven't. They've exploded.

The raw pricing data makes the trend impossible to ignore. Since GPT-4 launched at $30 per million input tokens in early 2023, equivalent capability has fallen roughly 10 to 20 times in price by mid-2025. Budget models have moved even faster: Google's Flash dropped from $0.35 to $0.075 per million tokens, a 79% cut in under 12 months. And that's before accounting for the open-source effect, where models like Llama and Mistral put relentless downward pressure on what closed-model providers can charge. The rough rule of thumb: about 10x cheaper every 18 months.
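The arithmetic behind these figures is easy to check. The short sketch below recomputes the Flash cut from the two quoted list prices and converts the "10x every 18 months" rule of thumb into an implied yearly factor. The function name is illustrative, and the rule itself is an approximation, not a fixed law.

```python
# Worked check of the price figures quoted above (USD per million input tokens).
flash_before = 0.35   # Gemini Flash list price before the cut
flash_after = 0.075   # list price after the cut

cut = 1 - flash_after / flash_before
print(f"Flash price cut: {cut:.0%}")  # 79%


def annual_decline_factor(fold: float = 10.0, months: float = 18.0) -> float:
    """Per-year price-decline factor implied by a `fold`-times drop every `months` months."""
    return fold ** (12.0 / months)


print(f"Implied yearly drop: {annual_decline_factor():.2f}x")  # 4.64x
```

In other words, "10x every 18 months" works out to prices falling by a factor of roughly 4.6 each year, if the trend held steadily.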

So the cost curve is real. Inference is getting dramatically cheaper. And yet the enterprise bill keeps climbing. Why? Because companies aren't just doing more with AI. They're doing more with the best AI. Every time a developer swaps a cheaper model for a smarter one, every time a product team adds a new agentic workflow, every time a customer-facing chatbot gets an upgrade, the token bill grows. The savings from cheaper models are immediately reinvested into more powerful ones, more use cases, more volume.

The illusion is that "AI is getting cheaper." That's true per token. But at the enterprise application layer, costs are climbing fast enough to create genuine budget shock.

The Hidden Risk in Your AI Budget

To understand where enterprise AI costs are heading, you need to understand the business model of the companies setting the price. And that business model has a structural problem that directly affects every company spending on AI today.

The major LLM providers are not profitable on their core inference business. They are, to put it politely, subsidized: by venture capital, by strategic investments from Microsoft, Amazon, and Google, and by a Wall Street narrative that has been extraordinarily generous in accepting years of losses in exchange for market position. Cursor alleges that Anthropic and OpenAI are heavily subsidizing their AI coding tools: a $200/month Claude Code subscription could cost up to $5,000 in compute.

The numbers are staggering. OpenAI reportedly lost around $12 billion in a single quarter (July-September 2025), more than four times the entire cumulative losses Amazon recorded across its seven pre-profitability years before turning its first annual profit in 2003. The same company is forecast to lose $14 billion in 2026 alone. These aren't rounding errors or growing pains. They are structural losses being absorbed by investors betting that market dominance later is worth the bleeding now.

What this means for enterprise buyers is straightforward: the prices you are paying today are not sustainable prices. They are subsidized prices. The 10x-every-18-months cost curve is not a law of physics. It is a consequence of subsidized competition, and subsidized competition doesn't last forever. At some point, whether driven by investor pressure, the end of the current funding cycle, or the simple reality that no business can indefinitely sell below cost, the labs will need to price for sustainability. When that happens, the direction of travel reverses. Prices don't just stop falling. They rise, and they rise significantly.

For companies that have built workflows consuming millions of tokens per day on the assumption that tokens will keep getting cheaper, that moment will be a reckoning. And the pressure won't come from price alone. By the time repricing hits, AI usage inside most organizations will be an order of magnitude higher than it is today. More teams, more workflows, more automated agents running around the clock. The bill shock won't just be about a higher price per token. It will be a higher price per token multiplied by a consumption base that has grown 10x. That is the double squeeze that most finance teams are not modeling for today.
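To see why the two pressures compound rather than add, treat a monthly bill as price per token times volume. The sketch below uses purely hypothetical numbers (a $3.00 per-million-token price, 50 million tokens a day, a price that doubles on repricing, and 10x usage growth). None of these are quoted prices or forecasts.

```python
def monthly_bill(price_per_million: float, tokens_per_day: float, days: int = 30) -> float:
    """Monthly spend in USD for a per-million-token price and a daily token volume."""
    return price_per_million * tokens_per_day / 1_000_000 * days


# Hypothetical baseline: $3.00 per million tokens, 50M tokens per day.
today = monthly_bill(price_per_million=3.00, tokens_per_day=50_000_000)

# Hypothetical future: price doubles after repricing AND usage grows 10x.
later = monthly_bill(price_per_million=6.00, tokens_per_day=500_000_000)

print(f"today: ${today:,.0f}/month")        # $4,500
print(f"later: ${later:,.0f}/month")        # $90,000
print(f"multiplier: {later / today:.0f}x")  # 20x
```

A 2x price move alone would be manageable. Multiplied by 10x volume, it becomes a 20x bill.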

The question isn't if this repricing happens. It's whether your company will have built the efficiency layer before it does.

The New Optimization Imperative

This is where the conversation gets interesting, and where it becomes less about fear and more about strategy.

Companies that are thoughtful about their AI architecture today are already solving for tomorrow's cost environment. The playbook has several components: token compression to reduce the size of context and prompts without losing semantic meaning, output limiting to get the information you need without the verbose padding that language models love to generate, and intelligent model routing to match the complexity of a given task to the appropriate, most cost-effective model.
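Production compression tools use far more sophisticated techniques than this, and nothing below reflects how Edgee or any specific vendor works. As a minimal sketch, assuming a chat-style history stored as a list of lines, the two simplest levers look like this: trim redundant context down to a token budget, and cap the output. The 4-characters-per-token estimate is a rough heuristic, and `build_request` returns a hypothetical payload, not any provider's actual API schema.

```python
import re


def estimate_tokens(text: str) -> int:
    """Rough token estimate using the ~4 characters per token rule of thumb."""
    return max(1, len(text) // 4)


def compress_context(lines: list[str], token_budget: int) -> list[str]:
    """Naive compression: normalize whitespace, drop empty and duplicate lines,
    then keep the most recent lines that fit within token_budget."""
    seen: set[str] = set()
    cleaned: list[str] = []
    for line in lines:
        norm = re.sub(r"\s+", " ", line).strip()
        if norm and norm not in seen:
            seen.add(norm)
            cleaned.append(norm)

    kept: list[str] = []
    used = 0
    for line in reversed(cleaned):  # walk from newest to oldest
        cost = estimate_tokens(line)
        if used + cost > token_budget:
            break  # stop at the first line that would exceed the budget
        kept.append(line)
        used += cost
    return list(reversed(kept))  # restore chronological order


def build_request(prompt: str, max_output_tokens: int = 150) -> dict:
    """Hypothetical request payload: cap output length and ask for brevity."""
    return {
        "prompt": prompt + "\n\nAnswer in at most three sentences.",
        "max_output_tokens": max_output_tokens,
    }


history = ["User:   hello   there", "User: hello there", "", "Agent: how can I help?"]
print(compress_context(history, token_budget=100))
# ['User: hello there', 'Agent: how can I help?']
```

Keeping the newest lines first is a deliberate simplification: recent turns are usually the most relevant, but a real system would also weigh semantic importance.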

The logic is straightforward. Not every task needs GPT-4o or Claude Opus. A simple classification call, a short summarization, a yes/no routing decision: these can be handled by a smaller, faster, and dramatically cheaper model. A complex reasoning task, a nuanced synthesis, a high-stakes customer interaction: that's where you route to the frontier. The same intelligence that makes large language models powerful can be turned on the models themselves, deciding in real time which one best fits the job.
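A router can start as a handful of rules. The sketch below is a hypothetical rule-based stand-in. The tier names, prices, task labels, and 2,000-character threshold are all assumptions for illustration, and a production router would more likely use a trained classifier or a small model to make the call.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str               # placeholder model identifier, not a real model name
    usd_per_million: float  # assumed blended price per million tokens


SMALL = Tier("small-model", 0.10)
FRONTIER = Tier("frontier-model", 10.00)

# Task types assumed to be cheap enough for the small tier.
SIMPLE_TASKS = {"classify", "summarize_short", "yes_no"}


def route(task_type: str, prompt: str, high_stakes: bool = False) -> Tier:
    """Send simple, short, low-stakes tasks to the cheap tier; everything else to the frontier."""
    if high_stakes:
        return FRONTIER
    if task_type in SIMPLE_TASKS and len(prompt) < 2_000:
        return SMALL
    return FRONTIER


print(route("classify", "Is this ticket about billing?").name)       # small-model
print(route("synthesis", "Compare these three contracts...").name)   # frontier-model
print(route("yes_no", "Approve this refund?", high_stakes=True).name)  # frontier-model
```

The `high_stakes` override comes first on purpose: cost savings should never outrank the cases where quality matters most.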

Edgee, along with a growing ecosystem of AI infrastructure companies, is building exactly this layer. The goal isn't to avoid using powerful AI. It's to use it precisely, without waste, so that the budget freed up by efficiency can be reinvested in the places where intelligence genuinely creates value.

What This Means for Sam Altman

So, should OpenAI's CEO fear the rise of token compression technology?

The instinctive answer from inside an AI lab might be yes. If every enterprise customer is suddenly consuming 30% fewer tokens to accomplish the same tasks, that's a 30% revenue hit. At the scale OpenAI operates, that's a number worth worrying about.

But the more interesting answer, and arguably the correct strategic answer, is no. And understanding why matters just as much for enterprise buyers as it does for Sam Altman.

Token compression and intelligent routing don't reduce the value extracted from AI. They reduce the friction that prevents wider deployment. Right now, AI bill shock is one of the most underreported barriers to enterprise adoption. Finance teams at mid-sized companies are looking at their first year of AI infrastructure costs and getting cold feet. Product roadmaps are being scoped down because the CFO flagged the token spend in the quarterly review. Entire categories of use cases never get built because the unit economics don't work at current, unoptimized consumption levels. These aren't hypothetical scenarios; they're happening at scale today.

Make AI 40% cheaper to run through optimization, and you don't get 40% less usage. You get more use cases justified, more teams onboarded, more workflows deployed. The addressable market expands. More problems become worth solving with AI, and more of those problems eventually route up to the most capable, premium-priced models. Token compression doesn't shrink the market. It grows it.
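Whether cheaper usage grows or shrinks a provider's revenue depends on how far usage expands. If per-task cost falls by a fraction c, total spend stays flat only when usage grows by 1/(1 - c). For a 40% cut, that is about 1.67x. The sketch below (illustrative numbers only, ignoring the shift toward premium models) makes the break-even explicit: modest growth lowers total spend, while the broader expansion this argument anticipates raises it.

```python
def spend_ratio(cost_cut: float, usage_growth: float) -> float:
    """New total spend relative to old, when per-task cost falls by cost_cut
    (0.4 means 40% cheaper) and task volume is multiplied by usage_growth."""
    return (1 - cost_cut) * usage_growth


break_even = 1 / (1 - 0.4)  # usage growth at which total spend is unchanged
print(f"break-even growth: {break_even:.2f}x")                  # 1.67x
print(f"usage 1.4x -> spend {spend_ratio(0.4, 1.4):.2f}x")      # 0.84x
print(f"usage 2.0x -> spend {spend_ratio(0.4, 2.0):.2f}x")      # 1.20x
```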

This is how transformative technologies scale. Not by being expensive and exclusive, but by becoming efficient enough that the question shifts from "can we afford to use AI here?" to "can we afford not to?"

Conclusion: Build the Efficiency Layer Now

It's tempting, when AI budgets are growing fast, to ship quickly and optimize later.

But "later" has a deadline. When the subsidized pricing era ends and token costs normalize upward, the companies with an efficiency layer already in place will barely feel the impact. The companies that built on the assumption of ever-cheaper tokens will face a simultaneous shock: higher prices hitting a much larger usage base, with no infrastructure to absorb it.

Token compression, intelligent LLM routing, and AI cost observability are not niche engineering concerns. They are the foundation of a sustainable AI strategy. They make it possible to expand AI access inside an organization without every new use case becoming a budget negotiation. They give finance teams the visibility to approve more AI investment, not less. And they ensure that when the most powerful models are needed, the budget is there to use them.
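Observability can start small. As a sketch under stated assumptions (an in-memory store, made-up team and model names, and illustrative per-million-token prices), attributing every call's tokens to a team and checking against a budget gives finance a per-team view instead of one undifferentiated invoice.

```python
from collections import defaultdict


class CostLedger:
    """Minimal in-memory ledger that attributes token spend to teams.
    Model names and prices passed in are illustrative assumptions."""

    def __init__(self, usd_per_million: dict[str, float]):
        self.prices = usd_per_million
        self.spend = defaultdict(float)  # team -> USD spent so far

    def record(self, team: str, model: str, tokens: int) -> float:
        """Log one call's token usage and return its cost in USD."""
        cost = tokens / 1_000_000 * self.prices[model]
        self.spend[team] += cost
        return cost

    def over_budget(self, budgets: dict[str, float]) -> list[str]:
        """Teams whose recorded spend exceeds their budget (no budget means no limit)."""
        return sorted(t for t, s in self.spend.items() if s > budgets.get(t, float("inf")))


ledger = CostLedger({"small-model": 0.10, "frontier-model": 10.00})
ledger.record("support", "frontier-model", 2_000_000)  # $20.00
ledger.record("search", "small-model", 5_000_000)      # $0.50
print(ledger.over_budget({"support": 15.0, "search": 5.0}))  # ['support']
```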

For enterprises, the message is clear: build the optimization layer now, before the subsidies end. The companies that treat token efficiency as a strategic capability, not an afterthought, will be the ones best positioned when the pricing environment shifts.

For Sam Altman and the rest of the frontier AI leadership: the companies helping enterprises consume tokens more intelligently are doing your distribution work for you. The more affordable AI becomes to deploy at scale, the larger the market for the models only you can build.

That's not something to fear. That's something to accelerate.