A Cost and Control Layer for Production LLM Systems

On February 12th, we launched Edgee AI Gateway.

LLMs have transformed how software systems are built. However, once usage reaches production scale, architectural and economic constraints become central:

- Token consumption compounds quickly

- Cost variability increases with context depth

- Multi-model strategies introduce operational complexity

- Observability fragments across providers

What begins as experimentation evolves into infrastructure.

As discussed in our article on The AI Economic Paradox, increasing model capability simultaneously increases economic complexity.

At the same time, traditional monitoring approaches struggle to explain where AI spend actually comes from. In AI Cost Observability: The Missing Layer, we explore why production AI systems require a dedicated visibility layer connecting usage, models, and cost.

Edgee AI Gateway introduces structure, governance, and cost visibility into that layer.

Agentic Token Compression

Edgee's Agentic Token Compression optimizes prompts before they reach foundation models.

By removing redundant context while preserving instruction fidelity, it can reduce input tokens by up to 50% for production workloads such as RAG systems and agent-based architectures.
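To make the "up to 50%" figure concrete, here is a rough back-of-the-envelope sketch of what an input-token reduction means for spend. The function name, the monthly token volume, and the price per million tokens are illustrative assumptions, not Edgee figures or any provider's actual pricing, and 50% is the stated upper bound rather than a typical result.

```python
def estimate_input_savings(input_tokens: int,
                           usd_per_million_input: float,
                           reduction: float) -> dict:
    """Estimate input-token spend before and after compression.

    `reduction` is the fraction of input tokens removed (0.5 means 50%).
    All values are hypothetical inputs supplied by the caller.
    """
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")

    baseline_usd = input_tokens / 1_000_000 * usd_per_million_input
    compressed_tokens = round(input_tokens * (1 - reduction))
    compressed_usd = compressed_tokens / 1_000_000 * usd_per_million_input

    return {
        "baseline_usd": baseline_usd,
        "compressed_tokens": compressed_tokens,
        "compressed_usd": compressed_usd,
        "savings_usd": baseline_usd - compressed_usd,
    }


if __name__ == "__main__":
    # Assumed workload: 200M input tokens per month at a made-up $3.00 per million.
    print(estimate_input_savings(200_000_000, 3.00, 0.5))
```

Output tokens are unaffected in this estimate, so the overall bill shrinks by less than the input reduction whenever responses are long.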

Optimization is measurable and exposed directly in the dashboard.

For a technical explanation, see our deep dive on how Agentic Token Compression works.

A Unified Operational Dashboard

Cost optimization does not scale without structured visibility.

Edgee provides a unified operational dashboard consolidating LLM usage across models and providers.

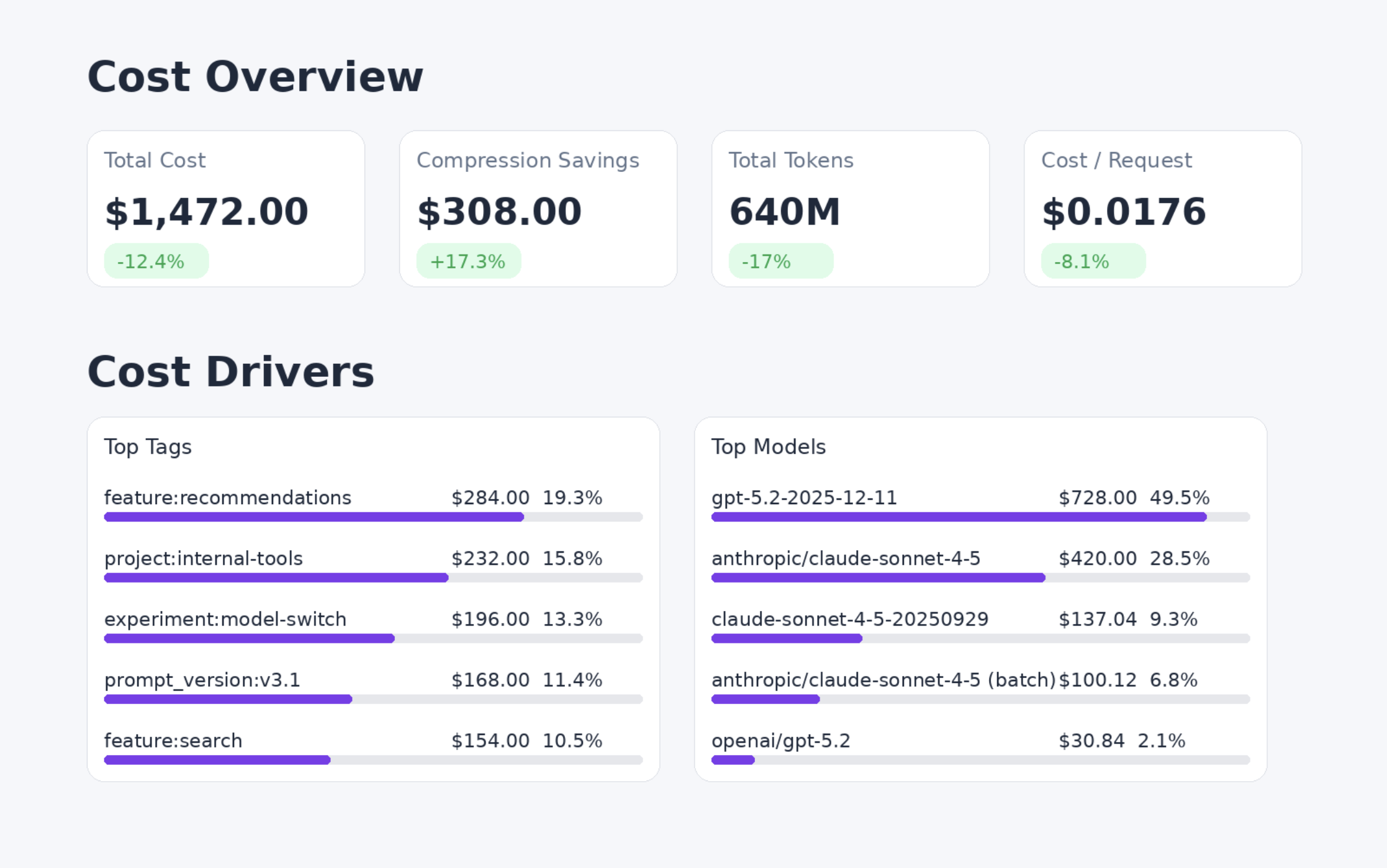

Engineering teams can immediately understand usage and spend through key metrics such as:

- Total cost

- Compression savings

- Token volume

- Cost per request

- Request volume

Usage can be analyzed across models, providers, and tags, helping teams understand which workloads, features, or environments drive spend.
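As an illustration of the kind of breakdown described above, the following sketch groups per-request usage records by one dimension and derives cost per request. The `UsageRecord` fields, the `summarize` helper, and the sample values are hypothetical; they do not reflect Edgee's data model or API.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRecord:
    # Hypothetical shape of one logged LLM request.
    model: str
    provider: str
    tag: str
    tokens: int
    cost_usd: float


def summarize(records: list[UsageRecord], dimension: str) -> dict:
    """Aggregate requests, tokens, and cost by 'model', 'provider', or 'tag'."""
    if dimension not in {"model", "provider", "tag"}:
        raise ValueError(f"unsupported dimension: {dimension}")

    totals = defaultdict(lambda: {"requests": 0, "tokens": 0, "cost_usd": 0.0})
    for record in records:
        bucket = totals[getattr(record, dimension)]
        bucket["requests"] += 1
        bucket["tokens"] += record.tokens
        bucket["cost_usd"] += record.cost_usd

    # Derive cost per request once all records are counted.
    return {
        key: {**bucket, "cost_per_request": bucket["cost_usd"] / bucket["requests"]}
        for key, bucket in totals.items()
    }


if __name__ == "__main__":
    sample = [
        UsageRecord("model-a", "provider-x", "checkout", 1000, 0.50),
        UsageRecord("model-a", "provider-x", "search", 2000, 1.00),
        UsageRecord("model-b", "provider-y", "search", 500, 0.25),
    ]
    for tag, stats in summarize(sample, "tag").items():
        print(tag, stats)
```

Tagging requests by feature or environment at the call site is what makes this kind of grouping possible later.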

Metrics can be explored over time and segmented across multiple dimensions — replacing fragmented provider dashboards with a single operational layer for monitoring and optimization.

Cost becomes a first-class operational signal in your AI stack.

Token Compression: Pre-Inference Optimization and Control

Edgee introduces Token Compression, a programmable layer that runs before requests are sent to LLM providers.

It enables context optimization, pruning, and token reduction ahead of inference without requiring changes to application integrations.

Agentic Token Compression is the first implementation.

Coding Assistant Token Compression, optimized for Claude-based workflows, will be released shortly, followed by integrations for OpenCode and Cursor.
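To show the general idea of a layer that runs before a request reaches a provider, here is a deliberately simple sketch that chains small context-pruning stages. It is not Edgee's compression algorithm: the stage functions, the `run_pre_inference` helper, and the duplicate-dropping heuristic are all assumptions chosen only to illustrate the pattern.

```python
from typing import Callable

# A stage takes a list of context chunks and returns a (possibly shorter) list.
Stage = Callable[[list[str]], list[str]]


def drop_duplicate_chunks(chunks: list[str]) -> list[str]:
    """Drop empty chunks and repeats, ignoring case and whitespace differences."""
    seen: set[str] = set()
    kept: list[str] = []
    for chunk in chunks:
        key = " ".join(chunk.split()).lower()
        if key and key not in seen:
            seen.add(key)
            kept.append(chunk)
    return kept


def cap_chunks(max_chunks: int) -> Stage:
    """Build a stage that keeps only the first `max_chunks` chunks."""
    def stage(chunks: list[str]) -> list[str]:
        return chunks[:max_chunks]
    return stage


def run_pre_inference(chunks: list[str], stages: list[Stage]) -> list[str]:
    """Apply each stage in order before the prompt is assembled and sent."""
    for stage in stages:
        chunks = stage(chunks)
    return chunks


if __name__ == "__main__":
    retrieved = [
        "Refund policy: 30 days.",
        "refund  policy: 30 days.",
        "Shipping takes 5 days.",
        "",
    ]
    print(run_pre_inference(retrieved, [drop_duplicate_chunks, cap_chunks(5)]))
```

Because the stages run in the request path rather than in application code, the calling application would not need to change when a stage is added or tuned.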

Edge Tools

Edge Tools centralize tool execution at the gateway layer.

Tools are defined once, executed alongside inference, and governed through centralized policy controls with full traceability.

This reduces distributed orchestration logic while preserving auditability and operational control.
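The pattern of defining tools once and governing them centrally can be sketched as a small registry that checks a policy and records every call. The `ToolGateway` class, its method names, and the caller-based allowlist are hypothetical and only illustrate the concept; they are not Edge Tools' actual interface.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolGateway:
    # Hypothetical central registry: tool name -> implementation.
    tools: dict[str, Callable[..., Any]] = field(default_factory=dict)
    # Hypothetical policy: caller name -> set of tool names it may invoke.
    allowed: dict[str, set[str]] = field(default_factory=dict)
    # Every attempted call is recorded, whether or not it was permitted.
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self.tools[name] = fn

    def allow(self, caller: str, name: str) -> None:
        self.allowed.setdefault(caller, set()).add(name)

    def call(self, caller: str, name: str, **kwargs: Any) -> Any:
        permitted = name in self.tools and name in self.allowed.get(caller, set())
        self.audit_log.append(
            {"caller": caller, "tool": name, "args": kwargs, "allowed": permitted}
        )
        if not permitted:
            raise PermissionError(f"{caller} may not call {name}")
        return self.tools[name](**kwargs)


if __name__ == "__main__":
    gateway = ToolGateway()
    gateway.register("add", lambda a, b: a + b)
    gateway.allow("billing-agent", "add")
    print(gateway.call("billing-agent", "add", a=2, b=3))
    print(gateway.audit_log)
```

Keeping the policy check and the audit record in one place is what removes the need for each application to carry its own orchestration and logging logic.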

Edgee AI Gateway Is Live

As LLM usage moves from experimentation to production infrastructure, cost control, observability, and model governance become core engineering concerns. Production AI systems require more than model access — they require visibility, control, and predictable economics.

Edgee is built to provide that operational layer.