Edgee isn’t just another proxy. It’s an edge-native AI Gateway that cuts LLM input-token costs by up to 50% through intelligent token compression. Together with edge computing, intelligent routing, and zero-trust security, this makes it purpose-built for production AI workloads at scale.

Token Compression

When enabled, token compression runs at the edge before your request reaches LLM providers. This can reduce input tokens by up to 50% for common workloads such as RAG pipelines, long-document analysis, and multi-turn conversations.

- Up to 50% token reduction

- Lower latency with smaller payloads

- Real-time savings tracking
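To put the percentage in concrete terms, the sketch below computes the input-token reduction and the resulting cost difference for a single request. The token counts and the $3.00-per-million-token price are made-up values for illustration, and `CompressionResult` and `input_cost_usd` are hypothetical names, not part of any Edgee SDK.

```python
from dataclasses import dataclass


@dataclass
class CompressionResult:
    """Hypothetical record of one request's input tokens before and after compression."""
    original_input_tokens: int
    compressed_input_tokens: int

    @property
    def tokens_saved(self) -> int:
        return self.original_input_tokens - self.compressed_input_tokens

    @property
    def reduction_ratio(self) -> float:
        # Fraction of input tokens removed; 0.5 corresponds to a 50% reduction.
        if self.original_input_tokens == 0:
            return 0.0
        return self.tokens_saved / self.original_input_tokens


def input_cost_usd(tokens: int, usd_per_million_tokens: float) -> float:
    """Cost of `tokens` input tokens at a per-million-token price."""
    return tokens * usd_per_million_tokens / 1_000_000


# Illustrative numbers only: a 120k-token prompt compressed to 60k tokens.
result = CompressionResult(original_input_tokens=120_000, compressed_input_tokens=60_000)
price = 3.00  # assumed USD per million input tokens; check your provider's actual pricing
before = input_cost_usd(result.original_input_tokens, price)
after = input_cost_usd(result.compressed_input_tokens, price)
print(f"reduction: {result.reduction_ratio:.0%}, saved ${before - after:.2f} per request")
# prints "reduction: 50%, saved $0.18 per request"
```

Output tokens are billed separately and are unchanged in this sketch, so the saving on a request's total bill is smaller than the input-token reduction whenever output tokens contribute to the cost.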

How It Works

Token compression analyzes your prompt structure to:

- Remove redundant context without losing semantic meaning

- Optimize RAG document formatting for better compression ratios

- Preserve critical instructions and few-shot examples

- Maintain output quality while reducing input costs
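The compression engines are not described at the implementation level here, so the following is only a toy illustration of the first and third points: it drops exact-duplicate paragraphs from retrieved documents (a common source of redundancy when RAG chunks overlap) while passing instructions and few-shot examples through verbatim. The `PromptPart` type, its `kind` labels, and the whitespace-based token count are hypothetical simplifications; a production engine would rely on a real tokenizer and semantic analysis rather than exact matching.

```python
import re
from dataclasses import dataclass


@dataclass
class PromptPart:
    kind: str  # hypothetical labels: "instruction", "example", or "document"
    text: str


def _normalize(paragraph: str) -> str:
    # Collapse runs of whitespace so trivially different copies compare equal.
    return re.sub(r"\s+", " ", paragraph).strip()


def compress_prompt(parts: list[PromptPart]) -> list[PromptPart]:
    """Toy compressor: removes duplicate paragraphs across retrieved documents.

    Instructions and few-shot examples are never modified.
    """
    seen: set[str] = set()
    out: list[PromptPart] = []
    for part in parts:
        if part.kind != "document":
            out.append(part)  # preserve critical instructions and examples as-is
            continue
        kept = []
        for para in part.text.split("\n\n"):
            norm = _normalize(para)
            key = norm.lower()
            if not norm or key in seen:
                continue  # skip empty paragraphs and ones already included
            seen.add(key)
            kept.append(norm)
        if kept:
            out.append(PromptPart("document", "\n\n".join(kept)))
    return out


def rough_token_count(parts: list[PromptPart]) -> int:
    # Whitespace word count as a crude stand-in for a real tokenizer.
    return sum(len(p.text.split()) for p in parts)


# Two retrieved chunks that overlap on their first paragraph.
prompt = [
    PromptPart("instruction", "Answer using only the documents below."),
    PromptPart("document", "Paris is the capital of France.\n\nIt lies on the Seine."),
    PromptPart("document", "Paris is the  capital of France.\n\nIts population is about two million."),
]
compressed = compress_prompt(prompt)
print(rough_token_count(prompt), "->", rough_token_count(compressed))  # prints "29 -> 23"
```

Exact deduplication is safe because it removes only text the model has already seen, but it catches far less redundancy than semantic approaches, which also collapse paraphrases.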

Multiple Compression Engines

Edgee offers multiple compression engines optimized for different workloads:

Claude Token Compression

Provides fully lossless compression for Claude Code.

Codex Token Compression

Provides fully lossless compression for Codex.

Agentic Token Compression

Combines semantic analysis, tool compression, and caching, and works with any model.

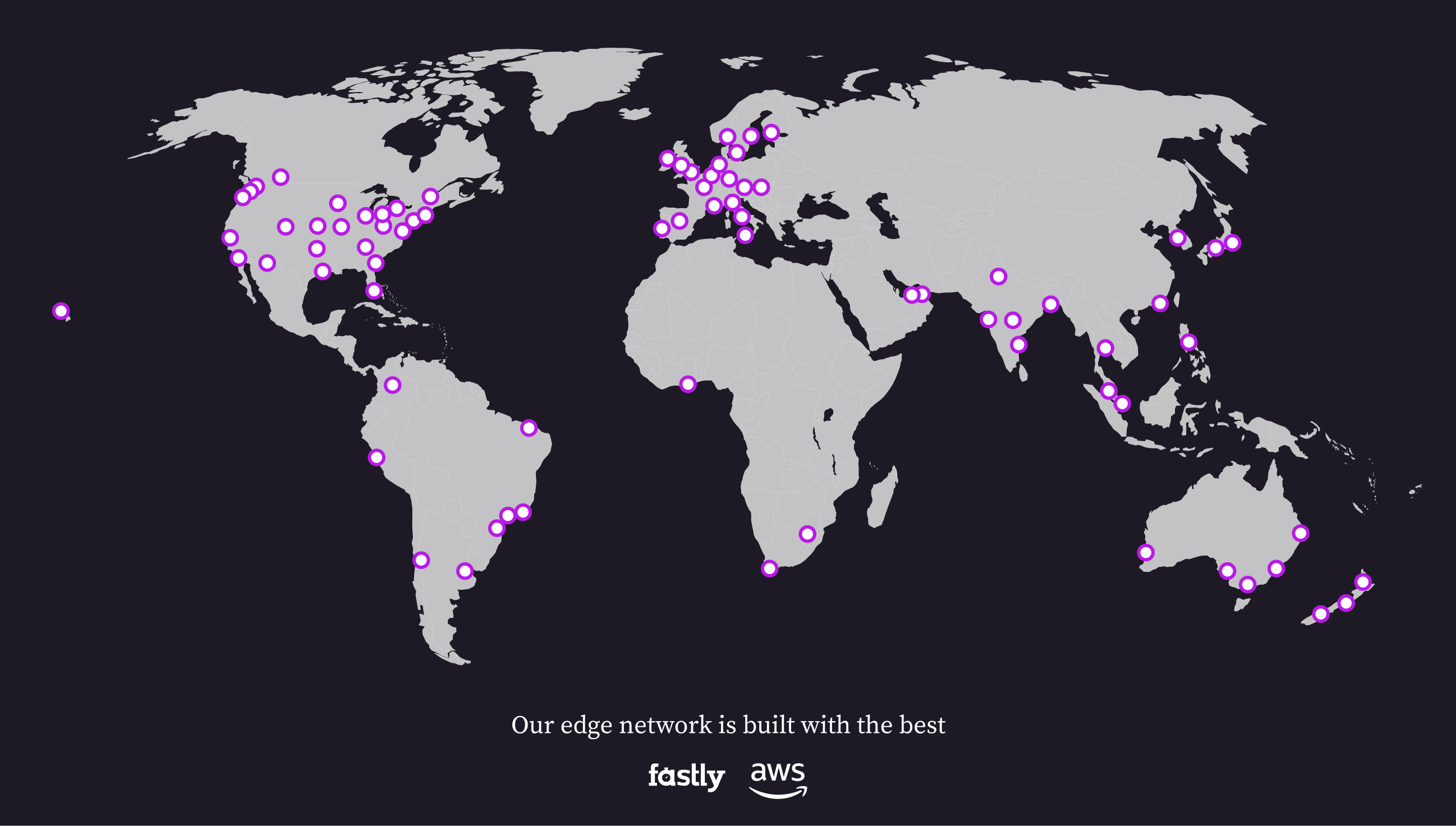

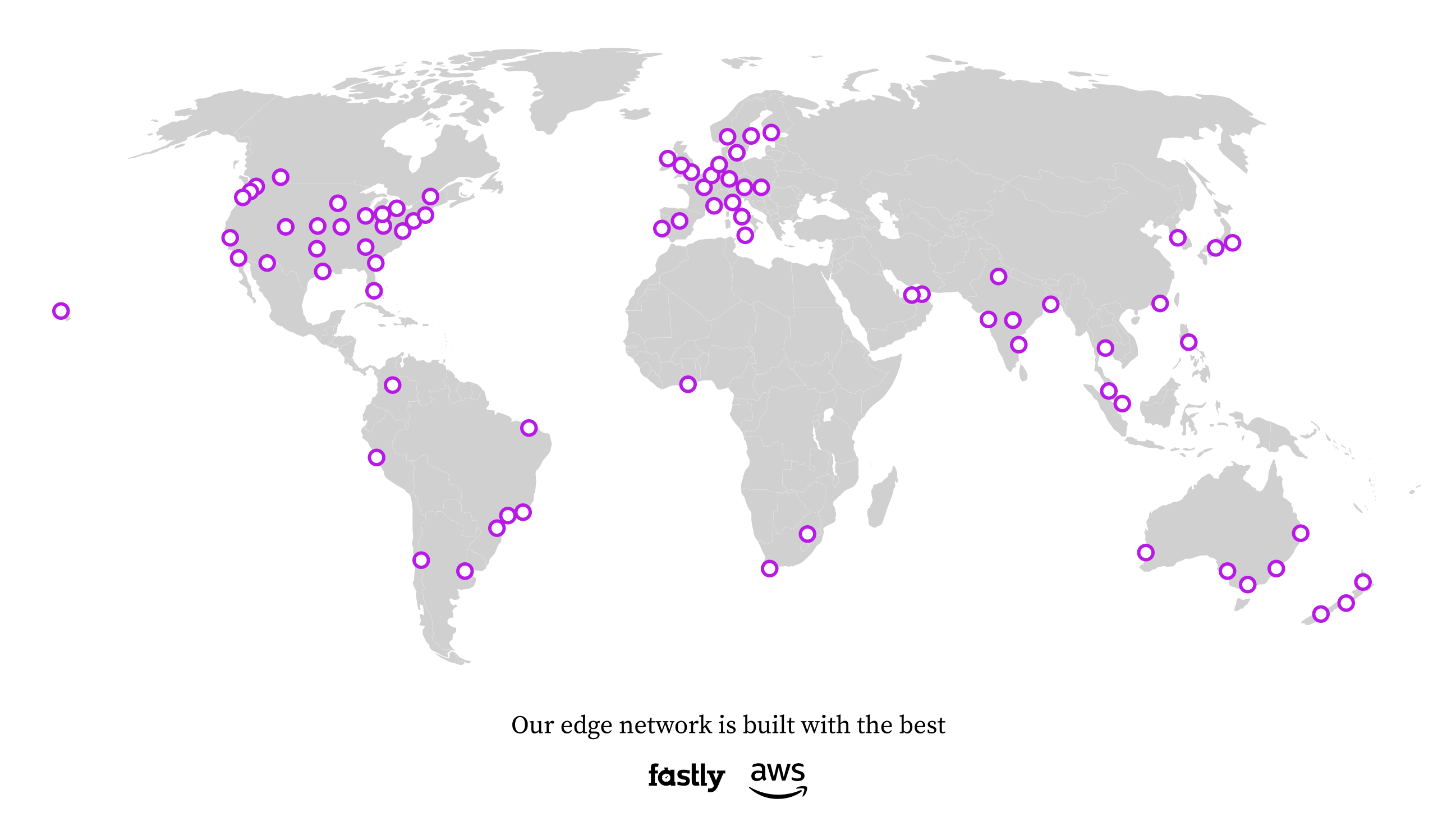

Edge-First Architecture

Traditional AI gateways route all traffic through centralized servers. Edgee processes requests at the edge, at the location closest to your application or users.

- < 10ms processing overhead

- 100+ edge locations

- Built-in privacy controls

How It Works

Global Network

Powered by Fastly and AWS, our network spans six continents.

Requests are automatically routed to the nearest point of presence (PoP) via Anycast, so no configuration is needed.
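With Anycast, every PoP announces the same IP address and internet routing (BGP) delivers each request to a topologically close PoP, which is why clients need no configuration. The sketch below only models the intuition of "nearest" using great-circle distance; this is a simplification, since real BGP path selection follows network topology and routing policy rather than geography. The PoP names and coordinates are hypothetical examples, not Edgee's actual locations.

```python
import math
from typing import NamedTuple


class PoP(NamedTuple):
    name: str
    lat: float  # degrees
    lon: float  # degrees


# Hypothetical PoP list for illustration only.
POPS = [
    PoP("paris", 48.86, 2.35),
    PoP("new-york", 40.71, -74.01),
    PoP("tokyo", 35.68, 139.69),
    PoP("sao-paulo", -23.55, -46.63),
]


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points on Earth, in kilometres."""
    r = 6371.0  # mean Earth radius in km
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def nearest_pop(lat: float, lon: float, pops: list[PoP] = POPS) -> PoP:
    """Pick the PoP with the smallest great-circle distance to the client."""
    return min(pops, key=lambda p: haversine_km(lat, lon, p.lat, p.lon))


print(nearest_pop(51.51, -0.13).name)  # client in London; prints "paris"
```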